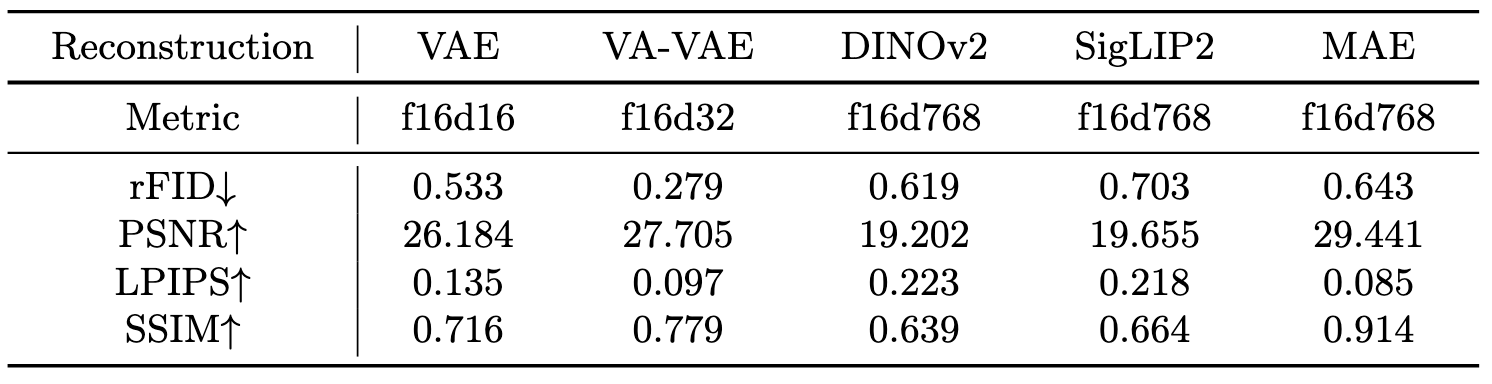

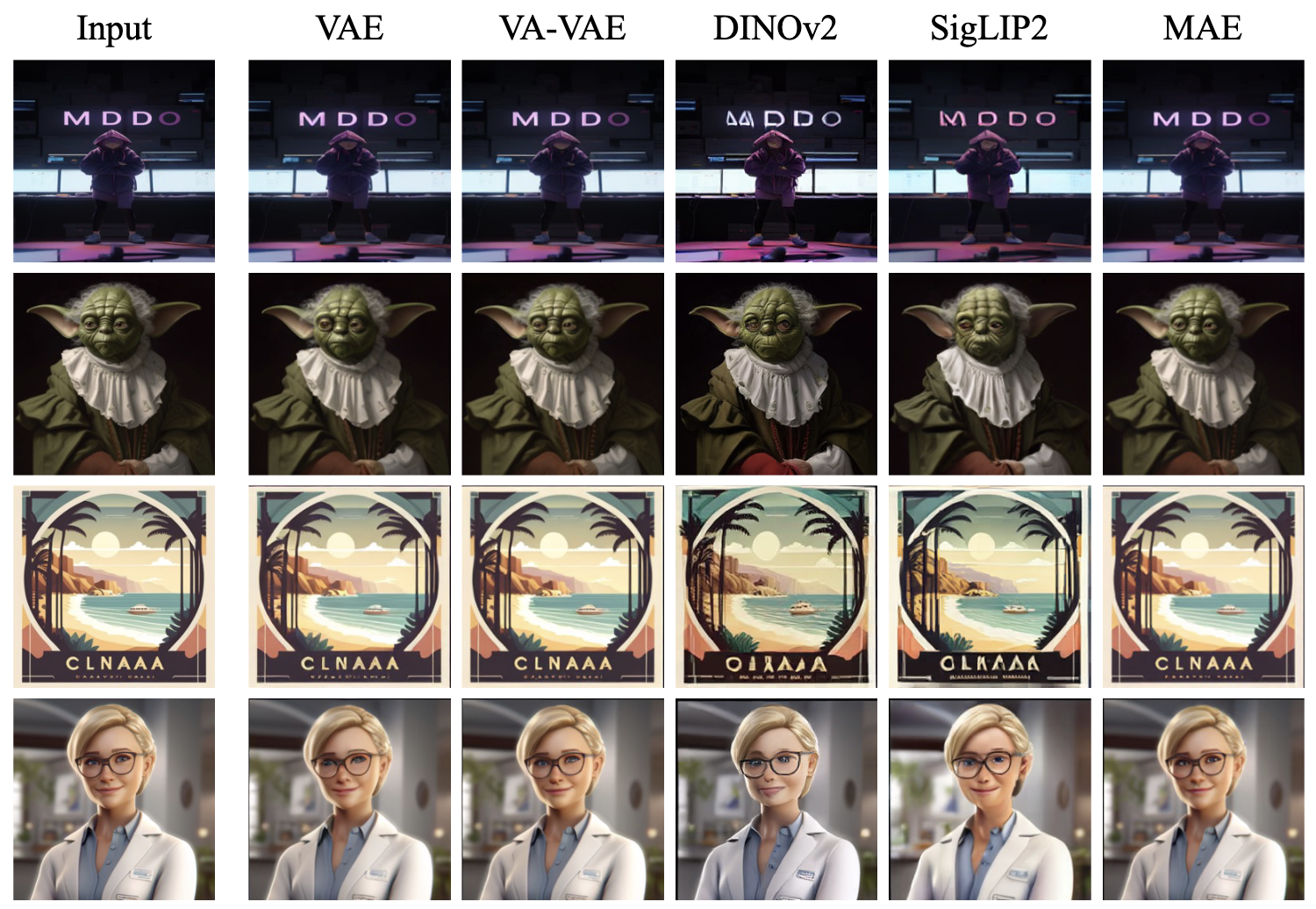

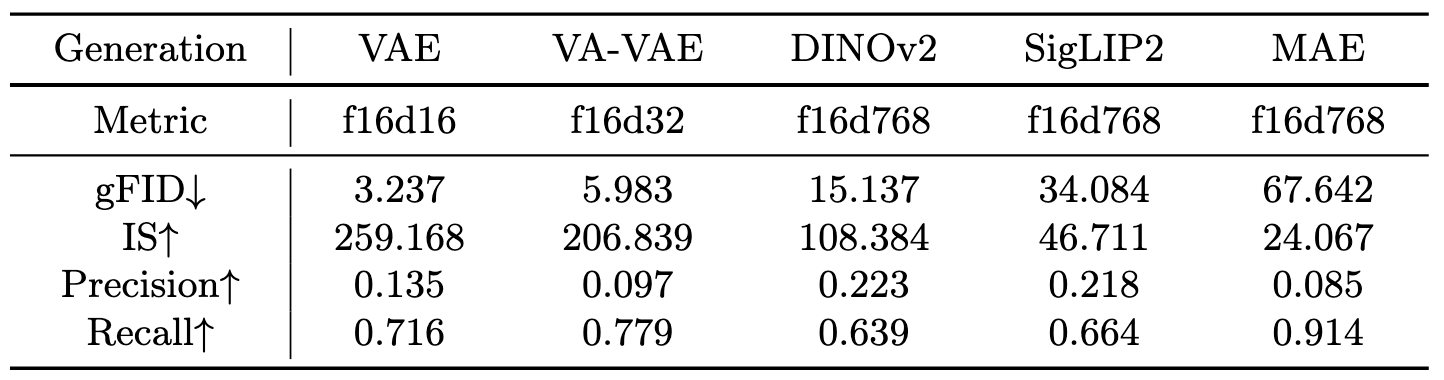

The latent space of generative modeling has long been dominated by the VAE encoder. Latents from pretrained representation encoders (e.g., DINO, SigLIP, MAE) were previously considered unsuitable for generative modeling. Recently, the RAE method showed that a representation autoencoder can achieve performance competitive with the VAE encoder. However, the integration of representation autoencoders into continuous autoregressive (AR) models remains largely unexplored.

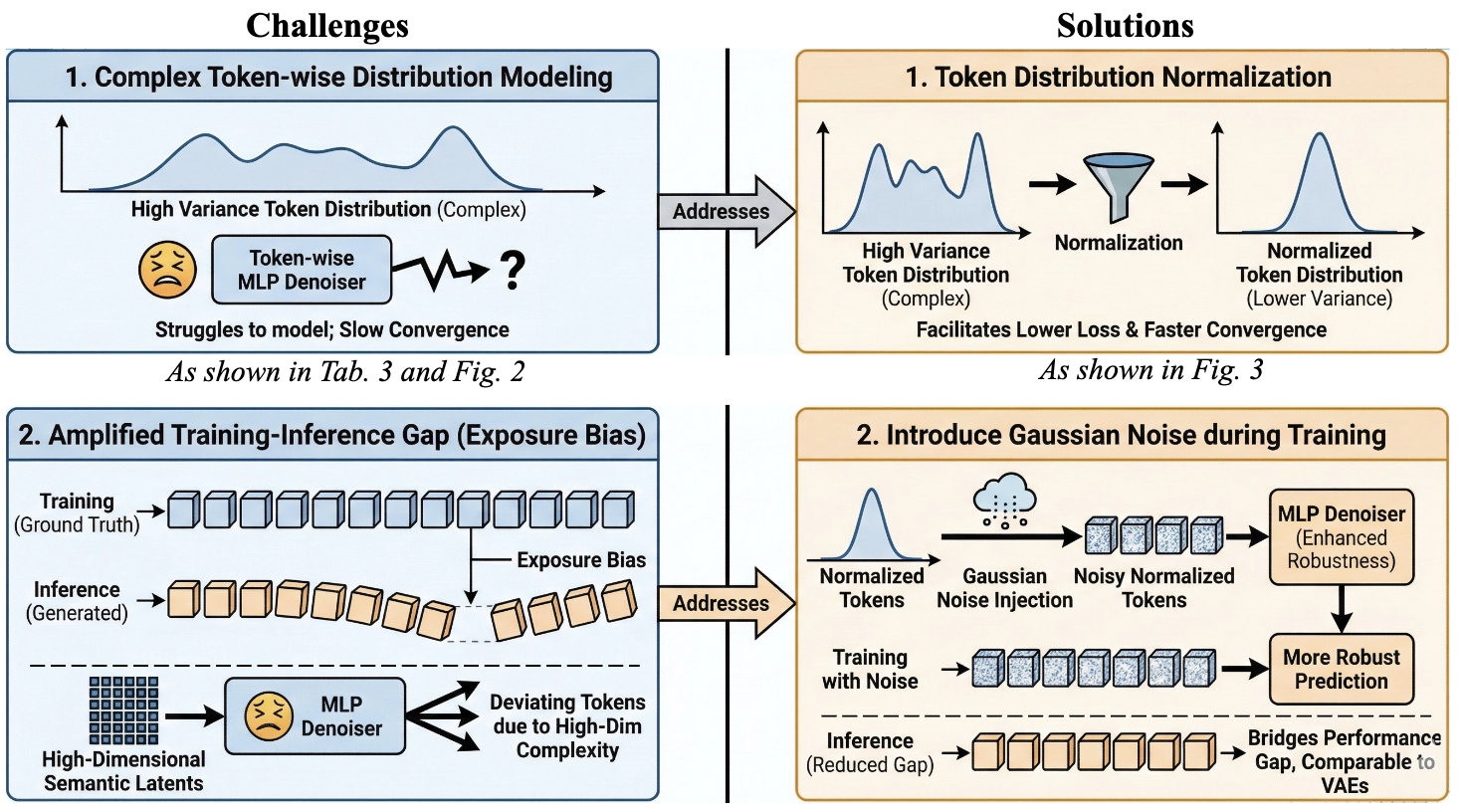

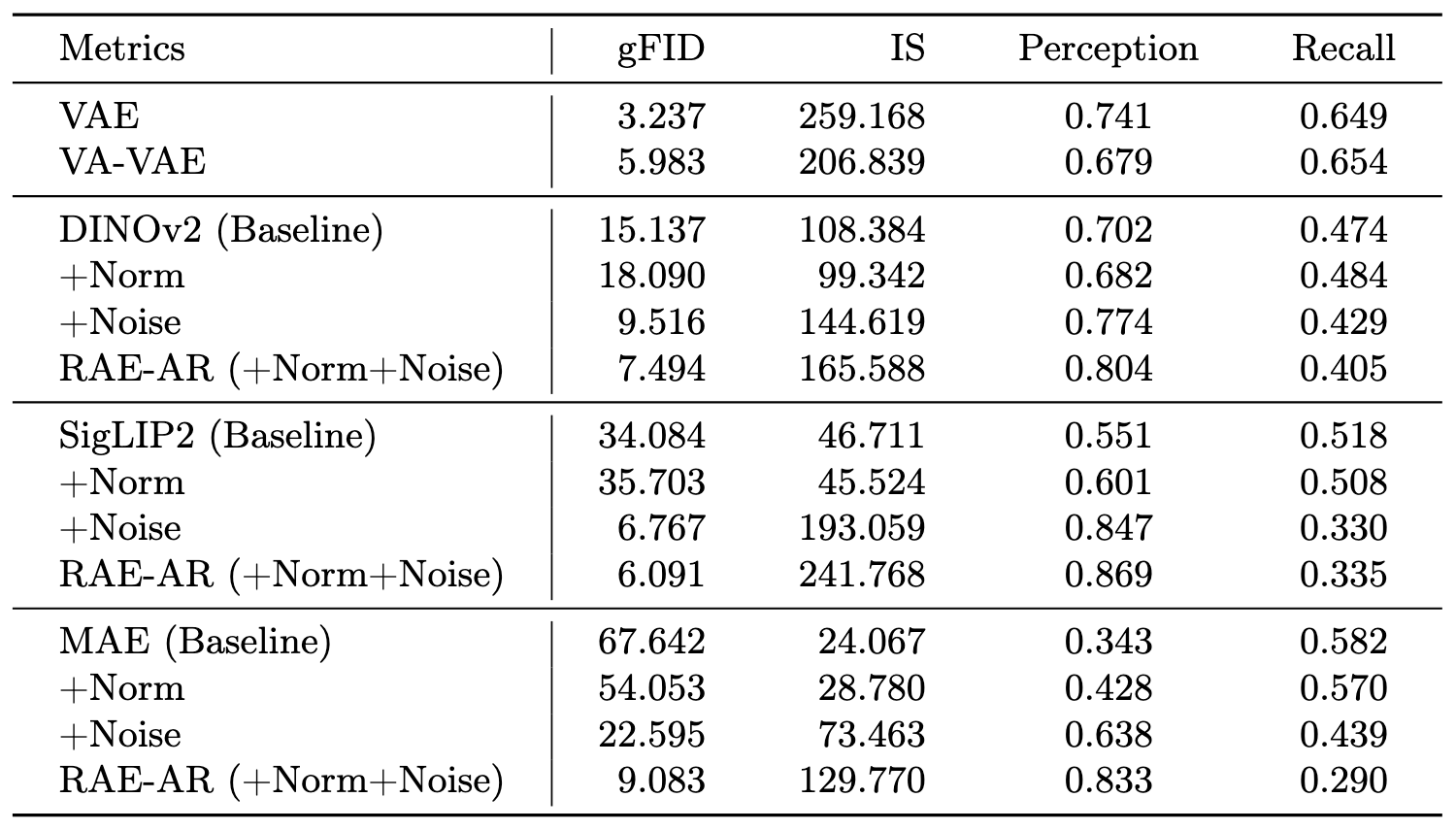

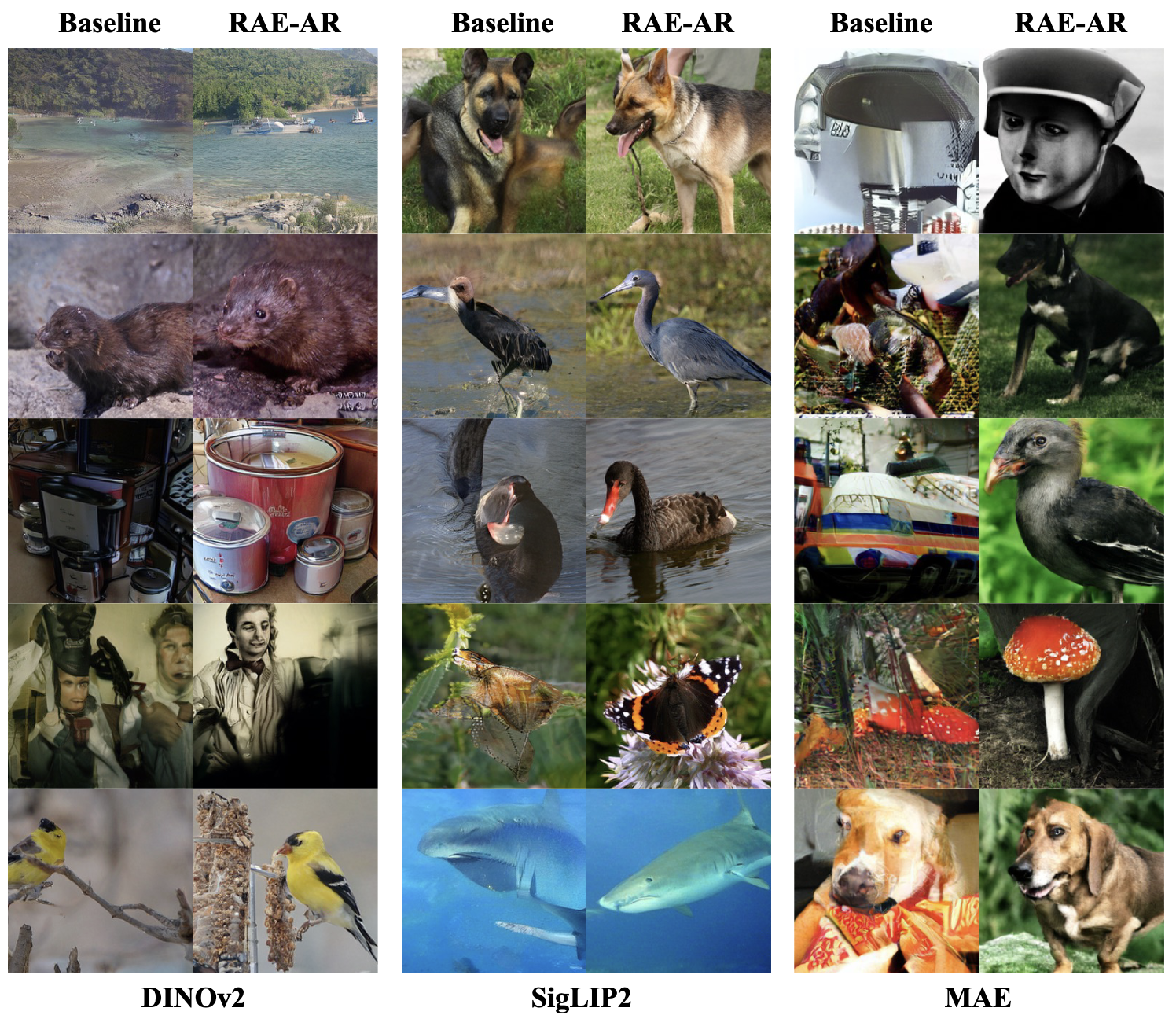

In this work, we investigate the challenges of employing high-dimensional representation autoencoders within the AR paradigm, a setting we denote RAE-AR. We focus on the unique properties of AR models and identify two primary hurdles: complex token-wise distribution modeling, and a training-inference gap (exposure bias) that is amplified by the high dimensionality of the latents. To address the first, we introduce token simplification via distribution normalization, which eases modeling difficulty and improves convergence. To address the second, we inject Gaussian noise during training, which makes predictions more robust and mitigates exposure bias. Our empirical results demonstrate that these modifications substantially narrow the performance gap, enabling representation autoencoders to achieve results comparable to traditional VAEs on AR models. This work paves the way for a more unified architecture across visual understanding and generative modeling.
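The two modifications can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the abstract does not specify how the normalization statistics are computed or how the noise is scheduled, so the per-channel standardization, the noise level `sigma=0.1`, the tensor shapes, and the function names `normalize_tokens` and `add_gaussian_noise` are all assumptions made for illustration.

```python
import numpy as np

def normalize_tokens(latents, eps=1e-6):
    """Standardize each latent channel to roughly zero mean and unit variance.

    Assumption: statistics are taken over the batch and token axes.
    latents has shape (batch, tokens, channels).
    """
    mean = latents.mean(axis=(0, 1), keepdims=True)
    std = latents.std(axis=(0, 1), keepdims=True)
    return (latents - mean) / (std + eps), mean, std

def add_gaussian_noise(latents, sigma, rng):
    """Perturb the tokens the AR model conditions on during training.

    Assumption: isotropic Gaussian noise with a fixed scale sigma.
    """
    return latents + sigma * rng.standard_normal(latents.shape)

rng = np.random.default_rng(0)

# Stand-in for encoder latents: 8 images, 16 tokens each, 32 channels.
latents = 5.0 + 3.0 * rng.standard_normal((8, 16, 32))

# Token simplification: normalize the latent distribution before AR training.
normed, mean, std = normalize_tokens(latents)

# Exposure-bias mitigation: the model sees noisy context tokens in training,
# approximating the imperfect tokens it will condition on at inference.
noisy_context = add_gaussian_noise(normed, sigma=0.1, rng=rng)
```

In this sketch the stored `mean` and `std` would be used to map generated tokens back to the original latent scale before decoding, and the clean `normed` tokens would remain the prediction targets while `noisy_context` serves as the model input.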